Abstract

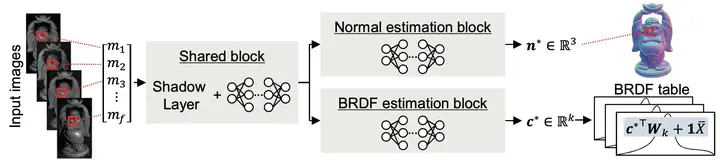

This paper presents a photometric stereo method based on deep learning. A major difficulty in photometric stereo is designing a reflectance model that can represent real-world reflectances yet remains computationally tractable for deriving surface normals. Unlike previous photometric stereo methods, which rely on a simplified parametric image formation model such as Lambert’s model, the proposed method establishes a flexible mapping between complex reflectance observations and surface normals using a deep neural network. The method also predicts the reflectance, which makes it possible to characterize surface materials and to render the scene under arbitrary lighting conditions. Specifically, we propose a deep photometric stereo network (DPSN) that takes reflectance observations under varying light directions and infers the surface normal and reflectance in a per-pixel manner. To make the DPSN applicable to real-world scenes, the network is trained on a dataset of measured BRDFs (the MERL BRDF dataset). Evaluations on simulated and real-world scenes show the effectiveness of the proposed approach in estimating both surface normals and reflectances.
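For context, the simplified parametric baseline that the abstract contrasts against can be sketched in a few lines. Under Lambert’s model, a pixel observed under light direction l has intensity ρ (n · l), so with three or more non-coplanar lights the scaled normal b = ρ n follows from per-pixel least squares. The sketch below illustrates that classical approach only; it is not the DPSN, and the function names (`solve_3x3`, `lambertian_photometric_stereo`) are hypothetical. It also assumes no shadows or specular highlights, which is exactly the limitation that motivates a learned mapping.

```python
import math


def solve_3x3(a, b):
    """Solve the 3x3 linear system a x = b using Cramer's rule."""
    def det(m):
        return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
                - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
                + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))

    d = det(a)
    if abs(d) < 1e-12:
        raise ValueError("light directions are degenerate (coplanar)")
    solution = []
    for k in range(3):
        # Replace column k of a with b, then take the determinant ratio.
        a_k = [[b[r] if c == k else a[r][c] for c in range(3)] for r in range(3)]
        solution.append(det(a_k) / d)
    return solution


def lambertian_photometric_stereo(lights, intensities):
    """Estimate (unit normal, albedo) for one pixel under Lambert's model.

    lights: list of unit light directions, each [lx, ly, lz]
    intensities: observed intensities, one per light (assumed unshadowed)
    """
    # Normal equations (L^T L) b = L^T i, where b = albedo * normal.
    ltl = [[sum(l[r] * l[c] for l in lights) for c in range(3)] for r in range(3)]
    lti = [sum(l[r] * i for l, i in zip(lights, intensities)) for r in range(3)]
    b = solve_3x3(ltl, lti)
    albedo = math.sqrt(sum(v * v for v in b))
    if albedo == 0.0:
        raise ValueError("all observations are zero; normal is undefined")
    return [v / albedo for v in b], albedo


# Synthetic check: render one Lambertian pixel, then recover its parameters.
true_normal = [0.6, 0.0, 0.8]
true_albedo = 0.5
lights = [[0.0, 0.0, 1.0], [0.6, 0.0, 0.8], [0.0, 0.6, 0.8], [-0.6, 0.0, 0.8]]
observations = [true_albedo * sum(n * l for n, l in zip(true_normal, light))
                for light in lights]
normal, albedo = lambertian_photometric_stereo(lights, observations)
```

On this synthetic pixel the recovered normal and albedo match the values used for rendering up to floating-point error. In practice one would typically solve the same system with a library routine such as `numpy.linalg.lstsq`; the closed-form fit breaks down once real materials deviate from Lambertian reflectance.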