Use of Machine Learning by Non-Expert DHH People: Technological Understanding and Sound Perception

Abstract

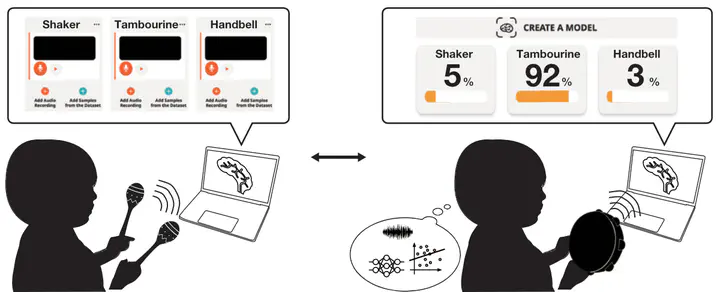

Recent advances in machine learning (ML) have demonstrated its potential for accessibility applications. However, recognition models and their application scenarios are often defined by ML experts and cannot fully capture the diverse needs of users with disabilities. To unlock the full potential of ML for accessibility, we must bridge a double gap faced by non-expert users, arising both from limited technological understanding and from their disabilities. In this work, we investigate how non-expert deaf and hard-of-hearing (DHH) people understand ML technologies and design ML-based sound recognition systems. We conducted a workshop study consisting of an ML lecture and an interactive learning session using a sound recognition system. Through observations during the workshop and semi-structured interviews, we found that non-expert DHH participants began to overcome this knowledge gap. They gained a more detailed understanding of ML technology and of how to use sounds to train ML models.